White House officials are working on a new AI policy memo that could reshape how U.S. security agencies use AI tools. The draft, still under review, sets rules for how AI firms work with the military and may help ease tensions between the Pentagon and Anthropic.

The memo has been in progress for months and is set to replace an earlier Biden-era policy. While it does not target any one firm, its timing is notable, as the Pentagon and Anthropic remain in a public dispute over how the company's AI can be used in defense work.

Push for Multiple AI Vendors

At the core of the memo is a push to avoid reliance on a single AI provider. Officials want agencies to work with several firms to reduce risk and improve supply stability. This could open the door for more companies like Alphabet (GOOGL) to expand their role in defense AI.

At the same time, the draft sets limits on how AI firms engage with the military. Companies would need to respect the chain of command, in which the president has the final say. However, the memo stops short of forcing firms to accept every possible use of their technology, which has been a key sticking point for Anthropic.

A senior White House official said the goal is not to resolve the dispute directly. Instead, the plan is to bring in more vendors so that “when” Anthropic is removed, the U.S. still has the tools it needs.

Balancing Control and Safeguards

The memo also tries to address concerns on both sides. For the Pentagon, it requires that AI systems used in defense not be changed without approval and that they remain free from bias. For firms like Anthropic, it adds contract terms to protect against misuse, including limits on unauthorized surveillance.

In addition, the policy calls for regular updates to rules on autonomous weapons, which remain a fast-moving area in defense tech.

These steps could give the Pentagon room to keep Anthropic's tools in use even as concerns remain. Anthropic's Claude Gov system is part of Palantir Technologies Inc. (PLTR) platforms used in active operations, which makes a full break harder in the near term.

Still, legal risks remain. Courts have so far allowed the Pentagon’s supply-risk label to stand, even as a broader ban on Anthropic’s tech is paused.

Outlook Remains Unclear

Looking ahead, the path is still uncertain. The White House is also focused on gaining access to Anthropic’s new Mythos model, which has shown a strong ability to find cyber flaws. Use of that tool is still limited, though officials are working on safeguards to expand access.

Meanwhile, recent talks suggest a softer tone. After meetings with Anthropic leaders, President Donald Trump said, “I think we’ll get along with them just fine.”

Overall, the draft memo signals a shift toward a broader AI strategy, one that spreads risk, keeps control in government hands, and leaves room for major tech firms to play a larger role in national security.

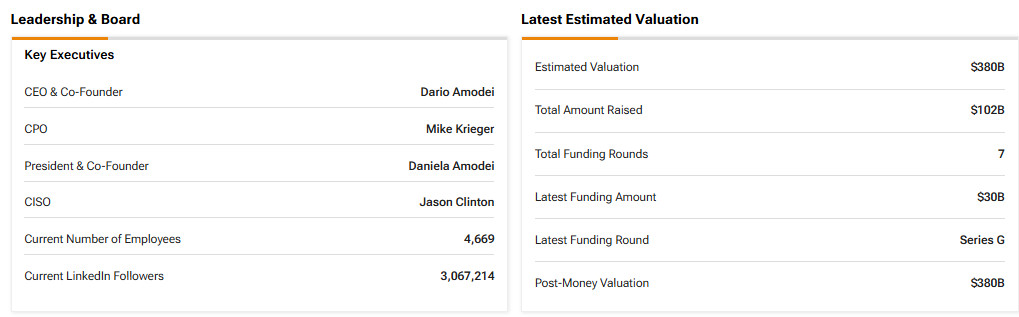

Although OpenAI remains a private company, investors can still monitor its performance and key developments through TipRanks’ Private Companies Center. Below is a screenshot for reference.